Someone with the right scars — not a framework, but the pattern recognition that comes from engineering organizational systems across enough companies to know what breaks and why — can learn more about an organization's readiness for AI from 48 hours of walking the halls and a cup of coffee with the right two people than any assessment framework will reveal in six weeks. Not from the dashboards or the strategy decks — from the things that are harder to name. How long it takes to get a straight answer to a simple question. Whether the same decision gets relitigated across three meetings. Whether anyone can explain what changed since last quarter and why. Those aren't on the assessment. They should be — because they tell you whether the organization actually functions as a system, or just looks like one on paper.

That's not what most AI readiness assessments are measuring. Pick up any of the major frameworks — Gartner, Deloitte, McKinsey, the consulting firm of your choice — and you'll find roughly the same checklist. Data infrastructure. ✅ Cloud maturity. ✅ Talent pipeline. ✅ Tool ecosystem. ✅ Executive sponsorship. ✅ That last one is telling — not because it's wrong to ask, but because of how it's asked. It's a checkbox. The CEO signed off. The budget is approved. Sponsorship: confirmed. But whether that sponsor has actually assessed how the organization operates and changed it to operate effectively — whether they've built the systems to absorb what they just funded — that's a different question entirely, and it's not on the assessment.

There's a name for the organizations that score well on those assessments and still fail to deliver. George Westerman and his colleagues at MIT studied over four hundred global firms and sorted them by two capabilities: technological and organizational. The companies that invested heavily in technology without building the organizational systems to match — they called them 'Fashionistas.' And here's the finding that should reframe every readiness conversation happening in a boardroom right now: Fashionistas gained revenue but not profitability — while Digital Masters, the ones that built organizational systems to match their technology, were 26 percent more profitable than their industry peers. The Fashionistas turned heads but couldn't turn a profit. The technology investment didn't convert. Not because the technology was wrong, but because the organization couldn't digest it. Westerman's data cuts both ways — organizations that built governance without investing in technology underperformed too. But the pattern in most boardrooms isn't underinvestment in technology. It's underinvestment in everything else.

Westerman's data isn't an outlier. McKinsey surveyed thousands of companies on their digital transformations and found that only 16 percent succeeded and sustained changes long-term. They identified five categories of success factors: leadership, capability building, empowering workers, upgrading tools, and communication. Four of the five are organizational. BCG found the same pattern from a different angle — of their six critical success factors for digital transformation, only one is technology. Two-thirds of the winning transformations had strong organizational agility. Ninety percent of the failures didn't. The research is remarkably consistent on this point: the technology is almost never what's missing — but it's almost always what's being discussed in the board budget meetings.

And keep in mind — those numbers are from transformations that were forgiving. Cloud migrations, ERP rollouts, digital commerce builds. Technologies that mostly did one thing, in one part of the organization, at a pace that gave people time to adapt. AI doesn't offer that margin. It compresses every mistake you'd have caught in a quarter into a Tuesday afternoon.

So what are they missing? Not something exotic. Not some new framework that requires a certification to understand. What the readiness assessments skip — what they've always skipped — is how the organization actually works. Not the org chart version. The real one. Whether decisions stick, information flows, roles are clear, and the organization actually learns from what goes wrong — or whether it just tells itself a better story in the post-mortem.

There's a reason the standard assessments don't measure any of this. It's hard. You can inventory a data infrastructure in a week. You can audit a technology stack in a sprint. But figuring out whether an organization's decision-making actually functions? Whether information arrives where it needs to, when it needs to, without being filtered into uselessness on the way? Whether the people closest to the work feel safe enough to say 'this isn't working' before it becomes a quarterly surprise? That takes presence and a kind of attention that doesn't fit in a scorecard. It also doesn't scale — and the big consulting firms need things that scale. A readiness assessment they can run with a team of twenty-six-year-olds and a standardized methodology across forty clients simultaneously is a product. Sending someone experienced enough to read the room into your organization for three weeks, and having them come back with an honest answer, is a service. One of those has margins. The other has insight.

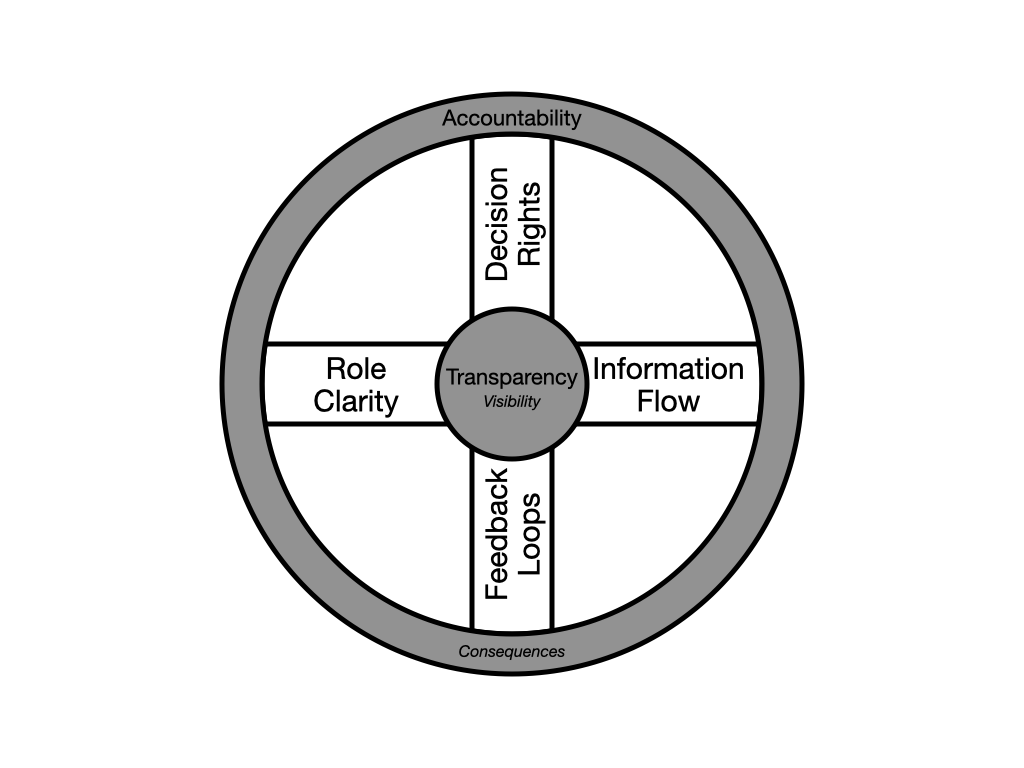

The dimensions themselves aren't new — if you've been paying attention, you've already felt them. How decisions get made and whether they stick. How information flows and whether anyone trusts it when it arrives. Whether roles are clear enough that people act, or vague enough that everyone's waiting. Whether the organization actually learns from outcomes or just generates post-mortems. But those are the spokes. They don't turn without a hub — and transparency is the hub. It's the center everything radiates through: the visibility that makes information flow honest and feedback loops real. But visibility without consequence is a spectator sport. Accountability is the tread — where the rubber meets the road. It's what gives decision rights teeth and role clarity meaning. The spokes connect the two — from 'who can see this?' at the center to 'who answers for it?' at the edge. Without both, you don't have an operating system. You have an org chart and a set of good intentions.

Most organizations have never built these systems deliberately. What they've built, over years and decades, is a network of workarounds — and the workarounds have names and badge numbers. Before AI, the ambiguity got quietly absorbed by the person who'd been there long enough to know which decisions to just make and which arguments to let die. The one who knew that the CRM data was only reliable if you pulled it on Tuesdays, that the VP of Product would approve anything if you framed it as a customer retention play, that the handoff between engineering and ops would silently break every quarter unless someone nudged it. That person was a workaround, not a system — and AI doesn't come with fourteen years of institutional scar tissue.

That's the gap no cookie-cutter readiness assessment is designed to find — the distance between how the organization is supposed to work and how it actually works, held up by people who learned to compensate so well that nobody noticed the bridge was supported by bubble gum and baling wire. When those people leave — or when their judgment gets replaced by a tool that can produce convincing output without any of that context — the gap doesn't close. The cracks that were held together by good intentions propagate out through the organization at GPU speeds until something crashes into reality. And by then, the AI has been cheerfully skipping every correction those people used to silently make — twenty times faster and without the coffee breaks.

This is why readiness can't be purchased. You can buy the infrastructure, the platforms, the tools, the training. You can hire the talent and fund the Center of Excellence. But you can't buy an organization that makes decisions cleanly, moves information honestly, holds people accountable without making them defensive, and learns from what goes wrong. That has to be built — intentionally, deliberately, structurally. Ideally before the AI rollout. Realistically, in parallel with it — but with eyes wide open about the risk you're carrying until those systems are in place. This isn't counsel to slow down. It's counsel to build while you move — and to know where the load-bearing walls are before you start knocking them out. And until those systems are in place, constrain the rollout. Keep AI within teams and organizational units where the systems already function — where decisions are clear, information flows, and someone's actually accountable for outcomes. The cross-team boundaries, the handoffs, the places where context gets lost on a good day — those are the places AI will do the most damage fastest, and they're the last places it should land. If a small team is already shipping real AI value, that's not a counter-argument — it's a proof point. Look underneath and you'll find clear ownership, tight feedback, and people who know whose call it is. That team built organizational readiness without calling it that. The question is whether the next ten teams will stumble into the same conditions, or whether someone needs to build them on purpose.

Organizations won't succeed with AI because they had the biggest technology budgets or the most aggressive rollout timelines. This isn't the kind of work you can outsource to a framework. The ones that succeed will be the ones that looked at their organizational operating system honestly — the decisions, the information flow, the transparency at the center and the accountability where it meets the road — and had the discipline to start fixing what was broken rather than hoping the new tools would fix it for them. That's not a technology initiative. It's not even a transformation initiative. It's the unglamorous, structural work of building an organization that can actually use the tools it's buying. Knowing what readiness looks like is the first step. The harder questions are why organizations resist the very structures that would save them — and what to build when you're ready to stop resisting.