The layoffs funded the AI budget. That's not cynicism — it's arithmetic. Three rounds of restructuring freed up the headcount dollars that became the infrastructure line item, and the board approved it because the pitch deck showed a productivity curve that made the math work. What the pitch deck didn't show was what those people were actually doing. Not their job descriptions — what they were doing. Absorbing ambiguity. Translating between teams that had never agreed on what the same words meant. Making decisions that weren't officially theirs to make, because the actual decision rights were so unclear that someone had to, and they'd been there long enough to know which calls to just make and which fights to let die. They weren't a system. They were a workaround. And the AI that inherited their org inherited a system that had been running on workarounds instead of structure — except the workarounds left with a box in their hands and a pink slip in their pocket.

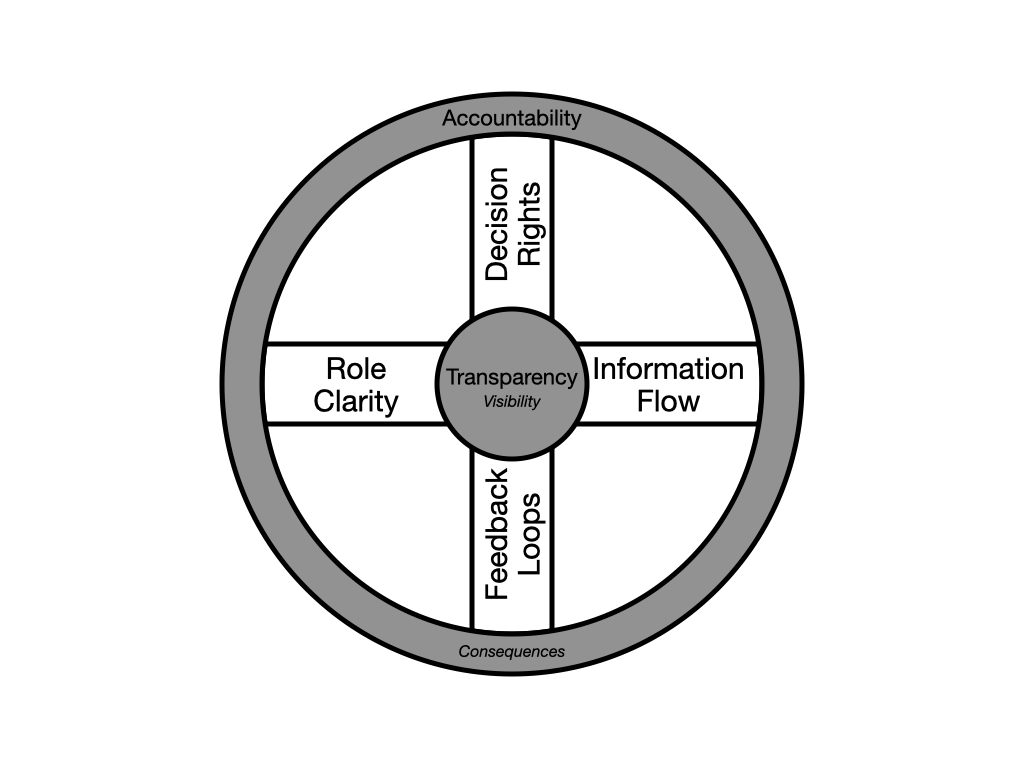

So what do you do instead? Over four articles, we've built the case — AI amplifies what's already there, organizational readiness isn't a technical checklist, and the constraints your teams need are the ones you build together. The hub-and-spoke model from Article 3 gave you the architecture: transparency at the hub, accountability at the tread, with decision rights, information flow, role clarity, and feedback loops as the spokes connecting visibility to consequence. Now it's time to talk about what it actually looks like to build it.

Here's what most frameworks get wrong: they hand you a list. Start with decision rights. Then move to information flow. Check the boxes, move on. But organizational systems don't work in sequence — they work in tension. Pull one and the others move. These aren't rungs on a ladder you climb one at a time. They're spokes on a wheel — and the work starts at the center and reaches all the way to where the rubber meets the road.

Transparency and accountability aren't a phase. They're the conditions that make everything else honest. If they're not in the air the organization breathes every day (in practice, not aspirationally, not in the values statement), then something is off, and every decision, every handoff, every conversation carries it long before anyone can name why.

Transparency is the hub — the center everything radiates through. It means the people affected by a decision can see how it was made — not after the fact in a deck that got sanitized on the way up, but in real time, in terms they can act on. McKinsey found that organizations where leaders clearly communicate the case for change are more than five times more likely to achieve a successful transformation — not because communication is magic, but because it enables the clarity that makes everything else possible. Without it, the spokes are invisible. People operate in the dark, building on assumptions they can't verify about decisions they can't see.

Accountability is the tread — where the rubber meets the road. It means someone specific answers for the outcome — not a team, not a committee, not a RACI chart that gives everyone enough coverage to point somewhere else when it goes sideways. A person. Named. With the authority to actually make the call they're being held responsible for. Without the tread, the wheel spins but goes nowhere. Decisions get made but nothing lands. Information flows but nobody acts. The system looks like it's working right up until someone asks what it produced.

If you don't have both, everything that follows is theater: captivating but without substance. You can define decision rights beautifully, but without transparency, nobody knows whether they're being followed. You can map information flow with surgical precision, but without accountability, nobody owns what happens when the information arrives too late. The hub lets you see. The tread gives you grip. And the spokes connecting them are where the actual work lives, radiating from *who can see this?* at the center to *who answers for it?* at the edge. Without both, you get motion without traction: everyone's busy, and nobody can tell you what it added up to.

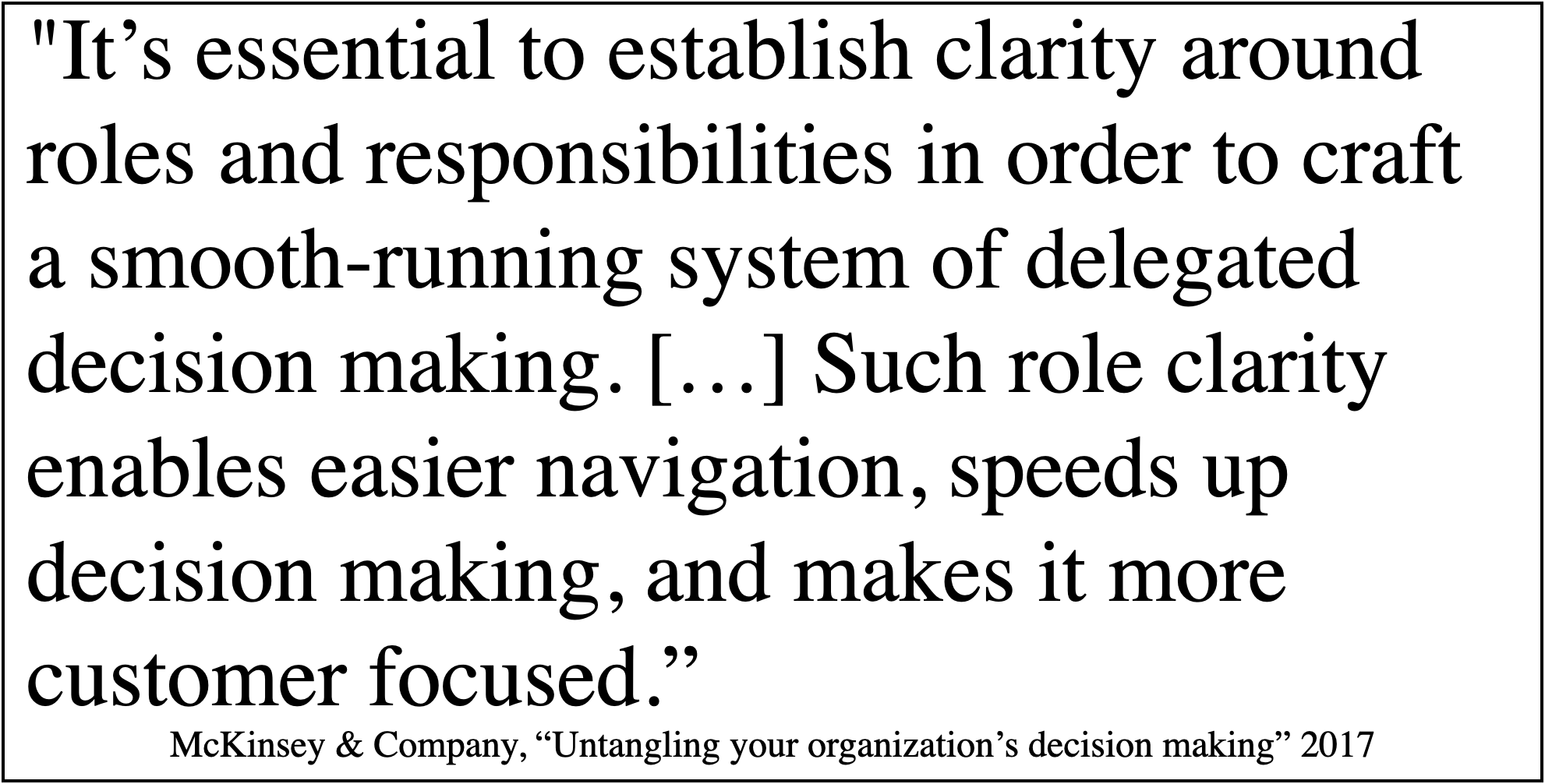

Decision rights are where most organizations think they've already done the work. There's an org chart. There are titles. Somebody's VP of something. But org charts describe reporting lines, not decisions — and the gap between those two things is where AI adoption goes to stall. The job description used to recruit someone describes what a candidate needed to have — qualifications, experience, domain knowledge. It says nothing about how they act once they're in the seat. Decision rights are about action: who can say yes, who can say no, and what happens when they disagree. Rogers and Blenko found that only about 15% of companies practice effective decision-making — and the common assumption that moving faster means deciding worse turns out to be backwards. McKinsey's research showed that speed and quality of decisions correlate, not trade off — organizations with both see two and a half times the growth. The bottleneck isn't careful deliberation. It's ambiguity about who deliberates.

Doing this work means getting specific to the point of discomfort. Not "the product team owns product decisions" — that's a tautology, not a framework. Which decisions? At what scale? A product lead who can greenlight a UX change but needs three levels of approval to adjust a data pipeline isn't empowered — they're decorative. And the moment you hand that team an AI tool that can generate recommendations across both domains, the ambiguity doesn't just persist. It accelerates — and expands to cover every crack it can reach. The tool produces output at a pace the informal "go ask Sarah" system was never built to handle.

The work is a series of conversations that most organizations have never had — or had once and laminated the results. You sit down with the people who are actually in the decision path. Not their managers' version of the decision path — the real one. Who did you go to last time this kind of call came up? Why them? What happened when you disagreed? Where did it get stuck? You're mapping the actual system, not the org chart's fantasy of it. And you're doing it with leadership in the room — not to approve, but to hear the gap between what they designed and what's actually running.

What comes out of those conversations is specific. This person owns this class of decision up to this threshold. Above that, it escalates here — and "here" is a name, not a role. When two owners disagree, this is the tiebreak mechanism — not "escalate to leadership," which is code for "argue until someone with a bigger title gets tired of it." And critically: the people who live downstream of these decisions can see how they're being made. That's the hub and tread. If the people affected can't see it, it isn't a system. It's a secret. And secrets are poison to organizational effectiveness.

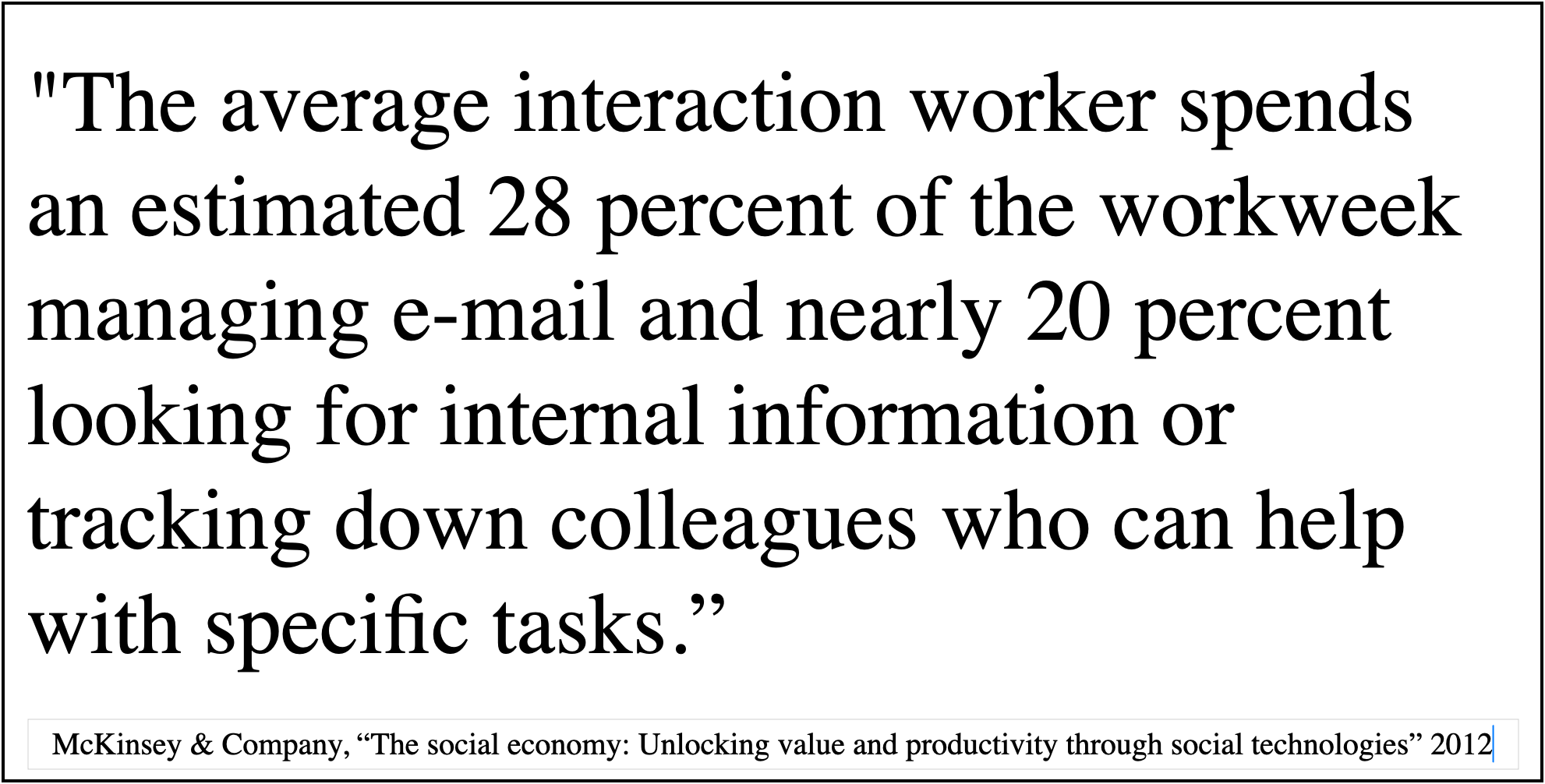

Information flow is the spoke where every organization overestimates itself. We have Slack. We have dashboards. We have a wiki. The information is available. But available isn't the same as flowing — and the difference will bury your AI initiative before anyone realizes it's in trouble.

The question isn't whether information exists. It's whether the right information reaches the right person at the right time in a form they can act on. And "right" is doing a lot of work in that sentence, because it's defined by the decision rights you just mapped. Once you know who decides what, you can identify, usually for the first time, what those people actually need to see, and when. What you find is almost always the same thing: they're drowning in information they don't need and starving for information they do. McKinsey found that the average knowledge worker spends nearly 20% of their time, roughly a full day per week, just searching for and gathering information. Think about what that means at scale: hire five people, and effectively only four show up to do the work. The fifth is off looking for answers. The dashboards are built for the people who commissioned them, not the people who use them. The wiki is a graveyard of good intentions. And the real information, the stuff that actually drives decisions, moves through back channels, side conversations, and the institutional memory of people who know which Slack channel actually matters and where to find the email they need to build the quarterly budget review deck.

Fixing information flow starts the same way decision rights did — with the actual state, not the intended one. You map it by asking the people who make decisions what they needed the last time they made one, and where they got it. Not where it was supposed to come from. Where it actually came from. The answers are almost never what leadership expects. The CTO learns that the engineering leads get their critical context from a group chat that doesn't include him. The product team discovers that the customer data they base their roadmap on is three weeks stale by the time it reaches them because it routes through a reporting chain that adds latency at every layer.

Then you redesign with the decision rights as your blueprint. Every decision you mapped in the previous step has an information requirement. What does this person need to see, in what form, on what cadence, to make this call well? And who's accountable — there's the tread — for making sure it gets there? Not "the system handles it." Not "it's in the dashboard." A person who owns the flow and answers for it when the decision-maker is flying blind.

Here's what makes information flow the spoke with the highest AI upside, provided the structural work is done first. Once you've mapped who provides what information, what context it carries, and who needs which pieces of it combined with the other data relevant to their decisions, the AI can make those connections at a speed and scale no human system can match. The right data reaches the right person at the right moment, shaped to their context and synthesized with the three other inputs they need to make the call. Not because someone remembered to forward an email or knew which dashboard to check, but because the system understands the source, the destination, and the contextual requirements of both.

But here's the part that keeps getting skipped: for any of that to work, you have to have done the work. The AI needs something real to connect to. Without clear decision rights defining who needs what, and without mapped information flows defining what context matters and when, the AI doesn't accelerate anything. It just spins out more threads of information, and they end up tangled around everyone's ankles, tripping people over the very thing that was supposed to speed them up.

And this is where the iteration starts. You've just redesigned information flow based on the decision rights you mapped. But the act of redesigning it surfaces problems in the decision rights themselves. You discover that two people have overlapping authority because they were each making calls based on different information — and now that they're seeing the same data, someone needs to own the tiebreak. Or you find that a decision right you assigned to a director doesn't make sense anymore, because the information they need to make that call well only exists at the team level — so either the information has to travel up, adding latency, or the decision right has to travel down. Either way, something you thought you'd finished just reopened.

This is the part that makes leadership uncomfortable. It looks like rework. It feels like the process is broken. But it's the opposite — it's the process working. A framework that doesn't force you back to revisit earlier assumptions isn't rigorous. It's wallpaper.

Role clarity sounds like the simplest spoke. Everyone has a job description. But job descriptions describe what you were hired to do, not what you actually do, and in most organizations the delta between those two things is where the real work lives. Meta-analyses of organizational behavior consistently find that role ambiguity is among the strongest predictors of reduced job performance. The drag rarely comes from idleness; it comes from duplication, conflict resolution, and the invisible tax of people negotiating boundaries that should have been defined. The person whose title says "senior engineer" but who actually spends half their time chasing down deployment approvals, because the sign-off process has three owners and none of them agree on the threshold. The project manager who's officially coordinating timelines but is really the only person who knows which stakeholders need to be in which room. The work that keeps the machine running but doesn't show up in any system, any review, any capacity plan.

Role clarity isn't about rewriting job descriptions. It's about surfacing the work that's actually happening and deciding — deliberately, in a room with the people doing it — what's essential, what's accidental, and what only exists because something else is broken. That third category is the one that matters most, because it's the one AI will expose. When you automate a workflow and the output is wrong in ways nobody predicted, it's almost always because someone was quietly adjusting for a gap that was never acknowledged. They weren't in the process. They weren't in the documentation. They were just there — until they weren't.

This is where the work gets personal. Defining role boundaries means telling people — explicitly — what isn't theirs. And if the trust isn't there, if leadership hasn't earned credibility through the process of building these constraints together, the boundaries don't feel like clarity. They feel like shackles. The pushback is predictable: you're limiting me. You're boxing me in. I used to be able to help with that. And the empathetic leader — the one who genuinely cares about their people — hears that and pulls back. Restores the ambiguity. Feels magnanimous about returning the freedom. And the failure starts baking again, right on schedule.

The leader with vision does something harder. They sit down, one on one, with the person who's pushing back — and they paint the picture. Not the constraints. The other side of them. Here's what it looks like when you're not spending half your energy on work that isn't yours. Here's what happens when your creativity is aimed at a problem you actually own, with the authority to solve it and the clarity to know what good looks like. Not a cage. A race car. Because everything that made them valuable — the instinct, the pattern recognition, the ability to see around corners — is still there. It's just pointed somewhere now.

The work itself is an audit that no org chart can give you. You sit with each team — not in a town hall, not in a survey, in a room — and you ask: what do you actually do in a given week? Not your job description. Your week. Where do you spend time that you don't think is yours? Where do other people step into territory you thought you owned? Where are you making judgment calls that nobody asked you to make, because if you didn't, they wouldn't get made?

Then you put that map next to the decision rights and the information flows you've already built. The overlaps become visible. Two people both think they own the same handoff. A critical function lives entirely inside one person's informal routine — nothing upstream or downstream knows it's there. A team lead is spending a third of their time on work that belongs to a role that was eliminated in the last reorg and never redistributed. You're not building role clarity from scratch. You're excavating it from what's already there and making it explicit, durable, and — here's the hub — visible to everyone it affects. And then asking — here's the tread — who answers for it when it drifts.

And here's where most consultants would tell you you're done — you've had the meeting, you've mapped the roles, ship it. But the map you get in a conference room is the one people can articulate under fluorescent lights with their manager two chairs away. It's not wrong. It's incomplete. The real map lives in the moments nobody thinks to mention — the split-second judgment calls, the reflexive reach across a boundary, the thing you do on autopilot on a Tuesday afternoon that you'd never think to list in a meeting on a Thursday morning.

So you don't end with the meeting. You end with an assignment: go work the way you normally work for the next two weeks. But this time, every time you make one of those calls — every time you step into a gap, route around a process, or make a decision you're not sure is yours — write it down. Don't judge it. Don't fix it. Just notice it. Then you come back together and compare what people wrote down with what they said in the room. The delta is where the real work is. And it's almost always bigger than anyone expected, because these habits are invisible to the people who have them. They're load-bearing instincts, not conscious choices.

Feedback loops are the spoke that tells you whether any of the other three are actually working. Decision rights, information flow, role clarity — you can build all of them beautifully and still fail, because without feedback, you've built a system that can't learn. And a system that can't learn is a system that's decaying from the moment you finish building it.

The mistake most organizations make is picking a cadence and calling it done. Quarterly reviews. Annual planning. But consequences don't operate on a single timescale, and neither can feedback. Adobe found this when it eliminated annual reviews entirely in favor of continuous check-ins: voluntary turnover dropped 30%, and managers reclaimed over 80,000 hours previously spent on the old process. Not because feedback became easier. Because it became timely enough to act on. Some failures are visible tomorrow: a bad deployment, a missed handoff, a decision that blew up in a standup. Some don't surface for months: a slow drift in data quality, a team gradually building on assumptions that were wrong from the start, a customer segment quietly leaving.

You need feedback loops at every layer (daily, weekly, monthly, quarterly, annual), and they need to connect. The daily loop catches what it can. The weekly loop catches patterns the daily loop missed. The quarterly loop surfaces structural problems that only become visible over time. And the annual loop asks whether the whole system is still pointed at the right outcomes. Without the layering, you're either drowning in noise or flying blind between checkpoints. Without consistency across layers, the loops contradict each other, and people learn to game whichever one their boss actually reads.

This is where AI changes the stakes. People have a natural aversion to mistakes, because mistakes cost them — reputation, credibility, sometimes their job. That aversion is an imperfect feedback mechanism, but it's a real one. It creates caution where caution is warranted. AI has no such mechanism. An AI that makes a serious mistake apologizes, promises to do better, and gets right back to exactly what it was doing. There's no flinch. No career consequence. No sleepless night. Which means the feedback loop can't be left to natural consequences — it has to be engineered with the same rigor you brought to every other spoke. Who reviews AI output before it shapes a decision? How do you detect drift when the results are plausible but subtly wrong? And who's accountable — named, personally — when bad output isn't caught? Not just accountable in the "gets blamed" sense. Accountable in the sense that matters: with the authority to change the process, adjust the tool, or shut it down if the output isn't meeting the standard.

Building feedback loops means asking a different question than the other spokes. Decision rights, information flow, and role clarity all ask *what should this look like?* Feedback loops ask *how will we know if it's working, and what do we do when it isn't?*

You design them with the same people in the same rooms. For every decision right you mapped, there's a feedback question: how do we know this decision was a good one, and on what timescale? For every information flow, there's another: is the right information arriving in time, and how would we know if it stopped? For every role boundary, another: is this person doing what we agreed they'd do, and what happens when the work drifts? The answers become the instrumentation of the system — not a dashboard someone builds and nobody checks, but specific checkpoints owned by specific people at specific cadences. And when a checkpoint surfaces a problem, there's a defined path to act on it. Not "raise it in the next all-hands." A named owner, a timeframe, a mechanism.

Here's the part that nobody warns you about: you're not done. The wheel needs to keep turning. Not at the pace of the initial build — not burdened by the full weight of bootstrapping an organization's structural foundation from scratch — but in motion and under load. You've mapped decision rights, redesigned information flow, excavated role clarity, and instrumented feedback loops. Each one involved real conversations with real people and produced something specific and durable. And the moment you step back and look at the whole system, you realize that half of it needs to move.

It's not because the work was wrong. It's because the work was honest. When you built decision rights, you made assumptions about who had what information. Now that you've mapped information flow, some of those assumptions don't hold. A role you defined as "owns the customer escalation process" turns out to depend on three information flows that were never formalized — they were running through the person who left in the second round of layoffs. When you excavated role clarity, you found people doing work that bridges two decision domains you'd mapped separately. Now you need to decide: is that a role, or is that a gap in the decision rights? And when you instrumented feedback loops, you discovered that some of the checkpoints you designed require information flows that don't exist yet — because nobody needed them until the feedback structure made them visible.

This is the system working, not the system failing. Every spoke you built illuminated something about the others that wasn't visible before. That's what interconnection means in practice — not a diagram with arrows between boxes, but the lived experience of pulling one thread and watching three others shift.

And the iteration doesn't end when the system is built. Organizations aren't static. Priorities shift. People get promoted, leave, move teams. A decision right that made sense six months ago doesn't fit anymore because the person who held it is gone and the person who inherited it doesn't have the same context. An information flow that worked when the team was twelve people breaks at thirty. A role boundary that was clear when there was one AI tool in the workflow gets blurry when there are four.

But this is the difference between an organization that did the work and one that didn't. When something shifts, you're not starting from scratch. You're pulling on a thread you already understand and watching what else moves — because you mapped the connections. You know the impact radius. You can see that promoting your lead engineer into a director role doesn't just change a reporting line — it shifts a decision right, which changes an information flow, which means a feedback loop that was calibrated to one cadence now needs to operate at a different one. You see that before things start to slip, not after. And the adjustment is a tweak, not a rebuild.

This work isn't the shiny thing. It's not going to show up in a keynote or make the cover of a tech magazine. Investors aren't looking for companies that demonstrate excellence in organizational design. It's a slog, even under the best circumstances. But the companies that do this work will be the ones whose AI investments are still producing results in year three — when everyone else is writing theirs off. And if you haven't put serious effort into your organization's real operating system — the one that actually runs the place, not the one on the slide — then it doesn't matter how good your AI strategy is. The board approved the budget for a jet engine. What you feed it is up to you. Feed it the sludge from the sink trap at your corner deli, and you don't have a jet engine anymore. You have a very expensive anchor — and it will grind your teams to a halt while the board watches from the deck wondering where their investment went.

If you're reading this and thinking, *I believe you, but what do I do Monday morning?*, that's the right question, and the answer is specific to what you find when you open the hood. The architecture is here. The implementation depends on what's underneath yours.

One more thing. This series was written with AI. Not by AI — with it. Every article was built the same way the framework above describes: a human with thirty years of experience and instincts shaped by a score of organizations, working with a tool that could draft, research, and iterate at a speed no human can match. The constraints were mine — the editorial judgment, the "that's not quite right," the experience that knows what a reorganization feels like in the hallway, not just on the slide. The capability was the tool's. And the output is what you just read.

That's not a disclosure. It's the point. If this series landed, if it named something you've felt but couldn't quite articulate, that's what AI looks like when the operating system underneath it works. This is a small-scale example: one person, one tool, tight feedback loops. Enterprise deployment is a harder problem. But the principle is the same: human judgment shaping machine capability toward a specific outcome, with clear roles, clear ownership, and a feedback loop tight enough to catch the moment something drifts off course. The constraints channeled the capability. The capability amplified the constraints. That's the model, not just for writing a series of articles but for everything your organization is about to attempt with AI.